In everyday communication, we use thousands of non-verbal signals, such as facial expressions, intonation, gestures, and posture, to convey our emotions and feelings. Did you know that, according to one widely cited model, only 7% of the feelings and attitudes we convey come across through words, while 93% come through tone of voice and body language? Another estimate suggests that about 65% of a message’s meaning is delivered non-verbally.

These figures come from research by Albert Mehrabian, Professor Emeritus of Psychology at the University of California, Los Angeles (the 7%/93% split), and by American anthropologist Ray Birdwhistell (the 65% estimate).

That’s all clear. But you may ask: how does this apply to my business?

It’s all about missed opportunities. Correctly reading the emotions of your clients or partners brings many benefits. This is where emotion recognition technology, part of the broader field known as Affective Computing, comes in handy.

Emotion recognition software powered by Artificial Intelligence and Machine Learning interprets human emotions from non-verbal data such as facial expressions. By analyzing these unspoken reactions, businesses can better understand their customers and, as a result, improve the customer experience and increase their profits.

In this article, we will explore what emotion recognition is, how it works, and where it can be used. Let’s start!

What Is Emotion AI?

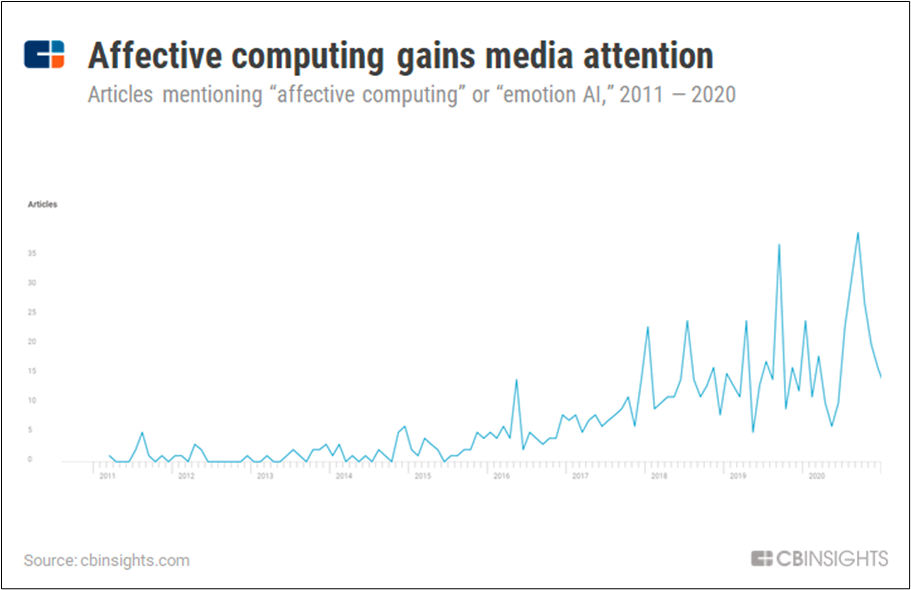

As a natural progression of facial recognition technology, emotion recognition is a field of AI, closely tied to computer vision, that is attracting growing media attention. It covers facial emotion detection as well as the automatic assessment of sentiment from body language, voice patterns, gestures, and other non-verbal signals.

The technology builds on the work of Paul Ekman, an American psychologist and a pioneer in studying emotions and their relation to facial expressions. Ekman originally proposed six basic emotions and later added contempt, so his model lists seven universal facial expressions of emotion: anger, contempt, disgust, enjoyment, fear, sadness, and surprise.
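To make the seven-category model concrete, here is a minimal Python sketch of the last step of a typical facial emotion classifier: turning one raw score per Ekman category into probabilities with a softmax and picking the most likely label. It assumes the raw scores (logits) come from some upstream face model that is not shown; the `classify_expression` helper and the example scores are hypothetical.

```python
import math

# Ekman's seven universal expressions, in the order listed in the text.
EKMAN_EMOTIONS = ["anger", "contempt", "disgust", "enjoyment",
                  "fear", "sadness", "surprise"]


def softmax(logits):
    """Convert raw scores into probabilities that sum to 1."""
    m = max(logits)  # subtract the max for numerical stability
    exps = [math.exp(x - m) for x in logits]
    total = sum(exps)
    return [e / total for e in exps]


def classify_expression(logits):
    """Hypothetical helper: map seven raw scores to the most likely label.

    The logits are assumed to come from an upstream face model (not shown).
    """
    if len(logits) != len(EKMAN_EMOTIONS):
        raise ValueError("expected one score per Ekman category")
    probs = softmax(logits)
    best = max(range(len(probs)), key=probs.__getitem__)
    return EKMAN_EMOTIONS[best], dict(zip(EKMAN_EMOTIONS, probs))


# Made-up scores for a single face image.
label, probs = classify_expression([0.1, -1.2, 0.3, 2.5, -0.4, 0.0, 1.1])
print(label)  # enjoyment
```

Returning the full probability map alongside the label lets an application act on uncertainty, for example by ignoring frames where no emotion clearly dominates.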

Emotion AI Use Cases

Emotion AI products and solutions are being incorporated into many industries to help deliver an emotionally rich experience. In healthcare, they can aid in the diagnosis of mental and neurological disorders. In education, they can help teachers engage students more in their lessons. In recruitment, they can assist talent acquisition specialists in finding the best candidates.

Let’s explore the most common industries utilizing emotion detection solutions.

Healthcare

Some medical centers use AI-based facial analysis to gauge the emotional state of patients in waiting rooms. This helps doctors prioritize patients who are feeling worse and see them sooner.
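A minimal sketch of how such prioritization could be wired up, assuming each check-in yields per-emotion probabilities from some upstream model. The `distress_score` heuristic (summing the probabilities of negative emotions) and the `WaitingRoom` class are hypothetical illustrations, not a description of any specific hospital system; in practice a score like this would be only one input alongside clinical triage.

```python
import heapq
from dataclasses import dataclass, field


@dataclass(order=True)
class WaitingPatient:
    # heapq is a min-heap, so the score is stored negated to pop the
    # most distressed patient first; arrival order breaks ties.
    sort_key: float
    arrival: int
    name: str = field(compare=False)


def distress_score(emotion_probs):
    """Hypothetical heuristic: total probability of negative emotions."""
    negative = ("anger", "disgust", "fear", "sadness")
    return sum(emotion_probs.get(e, 0.0) for e in negative)


class WaitingRoom:
    """Hypothetical priority queue of patients ordered by distress."""

    def __init__(self):
        self._heap = []
        self._counter = 0  # earlier arrivals win ties

    def check_in(self, name, emotion_probs):
        score = distress_score(emotion_probs)
        heapq.heappush(self._heap, WaitingPatient(-score, self._counter, name))
        self._counter += 1

    def next_patient(self):
        return heapq.heappop(self._heap).name


room = WaitingRoom()
room.check_in("patient A", {"enjoyment": 0.8, "sadness": 0.1})
room.check_in("patient B", {"fear": 0.6, "sadness": 0.3})
room.check_in("patient C", {"anger": 0.2, "enjoyment": 0.5})
print(room.next_patient())  # patient B
```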

Another project is designed to help children with autism spectrum disorder understand the feelings of others. The system runs on Google Glass: when another person is near the child, the glasses use graphics and sound to indicate that person’s emotions. Tests have shown that children socialize faster with such a “digital advisor”.

Finance & Banking

A typical use case in the finance sector is a banking app with integrated Emotion AI functionality. Such an app uses the device’s built-in sensors to detect facial expressions. Eye tracking is another option, as the front-facing cameras on recent smartphones can now precisely track a user’s gaze position. If customers lose attention and start looking away from the screen, that is a signal worth noticing and a reason to make changes.
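As an illustration, assuming an eye-tracking SDK that reports gaze points in screen pixel coordinates, attention loss could be flagged with a sliding window: if more than a set share of recent gaze samples fall off-screen, the user has probably looked away. The `AttentionMonitor` class, window size, and threshold below are hypothetical choices for the sketch.

```python
from collections import deque


class AttentionMonitor:
    """Hypothetical detector: flags attention loss when too many recent
    gaze points fall outside the screen. Window and threshold are
    illustrative assumptions, not tuned values."""

    def __init__(self, screen_w, screen_h, window=30, max_off_ratio=0.5):
        self.screen_w = screen_w
        self.screen_h = screen_h
        self.max_off_ratio = max_off_ratio
        self.samples = deque(maxlen=window)  # True = gaze was on screen

    def add_gaze(self, x, y):
        on_screen = 0 <= x < self.screen_w and 0 <= y < self.screen_h
        self.samples.append(on_screen)

    def attention_lost(self):
        if len(self.samples) < self.samples.maxlen:
            return False  # not enough data yet
        off = self.samples.count(False)
        return off / len(self.samples) > self.max_off_ratio


monitor = AttentionMonitor(screen_w=390, screen_h=844, window=10)
for _ in range(10):
    monitor.add_gaze(200, 400)   # looking at the screen
print(monitor.attention_lost())  # False
for _ in range(6):
    monitor.add_gaze(-50, 400)   # gaze drifts left of the screen
print(monitor.attention_lost())  # True
```

The window keeps the check robust to single blinks or quick glances; a longer window means fewer false alarms but slower reactions.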

Recruitment & HR

Another area where emotion detection technology is in demand is recruitment and human resources. Some large companies are implementing artificial intelligence to monitor employees’ behavior and psychological state: cameras with video analytics modules installed in the office can detect signs of stress among employees and alert HR departments.
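A sketch of the alerting logic, assuming the video analytics module already outputs a per-frame stress score between 0 and 1. Smoothing the scores with an exponential moving average and requiring several consecutive high readings avoids raising an alert over a single frown. The `StressAlert` class and its `alpha`, `threshold`, and `min_frames` defaults are hypothetical.

```python
class StressAlert:
    """Hypothetical alert rule: smooths per-frame stress scores (assumed
    to be in [0, 1]) and fires only on sustained high readings."""

    def __init__(self, alpha=0.2, threshold=0.7, min_frames=5):
        self.alpha = alpha            # weight of the newest score in the average
        self.threshold = threshold    # smoothed level that counts as "high"
        self.min_frames = min_frames  # consecutive high frames before alerting
        self.ema = None
        self.frames_above = 0

    def update(self, score):
        """Feed one frame's score; return True while an alert should be active."""
        if self.ema is None:
            self.ema = score
        else:
            self.ema = self.alpha * score + (1 - self.alpha) * self.ema
        if self.ema >= self.threshold:
            self.frames_above += 1
        else:
            self.frames_above = 0
        return self.frames_above >= self.min_frames


alert = StressAlert()
print([alert.update(0.9) for _ in range(5)])  # [False, False, False, False, True]
```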

Current State

Despite the technology’s many benefits, there are concerns too. Debate around emotion recognition has intensified because of privacy and transparency issues, the risk of racial bias, and broader ethical considerations.
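One common way to check a model for the racial bias risk mentioned above is to compare its accuracy across demographic groups on a labeled test set. The Python sketch below does exactly that; the function names and the toy data are illustrative only, not results from any real system.

```python
from collections import defaultdict


def per_group_accuracy(y_true, y_pred, groups):
    """Accuracy of emotion predictions, broken down by group."""
    correct = defaultdict(int)
    total = defaultdict(int)
    for truth, pred, group in zip(y_true, y_pred, groups):
        total[group] += 1
        correct[group] += int(truth == pred)
    return {g: correct[g] / total[g] for g in total}


def accuracy_gap(y_true, y_pred, groups):
    """Largest accuracy difference between any two groups."""
    acc = per_group_accuracy(y_true, y_pred, groups)
    return max(acc.values()) - min(acc.values())


# Toy labels, predictions, and group tags (made up for illustration).
y_true = ["anger", "enjoyment", "fear", "sadness"]
y_pred = ["anger", "enjoyment", "sadness", "anger"]
groups = ["A", "A", "B", "B"]
print(per_group_accuracy(y_true, y_pred, groups))  # {'A': 1.0, 'B': 0.0}
print(accuracy_gap(y_true, y_pred, groups))        # 1.0
```

A large gap signals that the training data or the model underserves some groups and needs attention before deployment.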

In June 2022, Microsoft announced it would stop offering broad access to its cloud-based AI technology that infers people’s emotions. However, the company will keep emotion recognition capabilities in Seeing AI, an app for people with vision loss, even though it acknowledges that the technology carries “risks.”

Microsoft and Google will continue to include AI-based capabilities in their products despite growing concerns about developing and deploying “controversial” emotion recognition in software applications.

Given these issues, why is demand for emotion detection technology still so high? Remember one rule: technology is not intrinsically good or bad; only how it is used determines that. And, as shown above, many sectors are already benefiting from emotion recognition software.

So, does emotion recognition matter to your industry? Doubts about the concept do not mean the technology isn’t worth the effort. In fact, the opposite is true: as demand for emotion recognition grows, its models and algorithms are likely to keep evolving, becoming more advanced, more secure, and more applicable to real-life situations.

How to Build an AI Emotion Recognition Solution?

This is where the Intetics AI and ML Center of Excellence comes in. It was created to help you turn bold technological ideas into working solutions.

We have accumulated expertise in various technologies, unique experience from customer projects, efficient methodologies, and best practices. Let’s train your program to investigate, assess, foresee, identify, and communicate!

Here is the tech stack we use in AI/ML projects for our clients:

- TensorFlow

- StanfordCoreNLP

- CatBoost

- Scikit-Learn

- NLTK

- Keras

- GPT-2

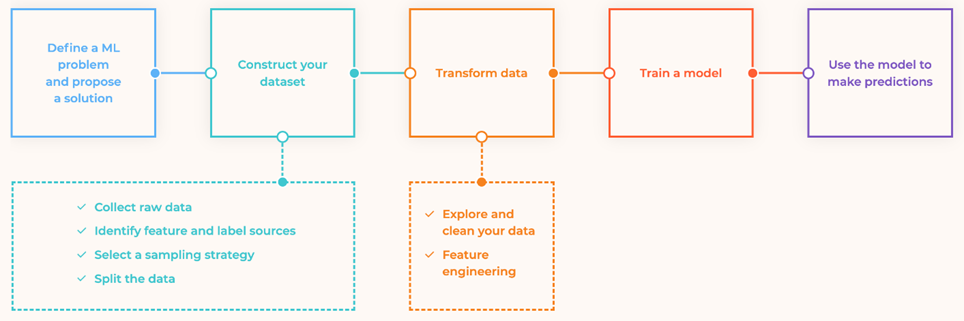

And this is how your machine will learn:
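As a simplified, generic illustration of supervised learning (not the actual Intetics pipeline), a model learns from labeled examples and then labels new ones. The sketch below uses a nearest-centroid classifier on two hypothetical facial features, `mouth_corner_lift` and `brow_raise`; real projects built with a stack like the one above would more typically train a neural network on face images.

```python
import math
from collections import defaultdict


def train_centroids(features, labels):
    """Learn one mean feature vector (centroid) per emotion label."""
    sums = {}
    counts = defaultdict(int)
    for x, y in zip(features, labels):
        if y not in sums:
            sums[y] = [0.0] * len(x)
        sums[y] = [s + v for s, v in zip(sums[y], x)]
        counts[y] += 1
    return {y: [s / counts[y] for s in sums[y]] for y in sums}


def predict(centroids, x):
    """Assign the label whose centroid is closest (Euclidean distance)."""
    return min(centroids, key=lambda y: math.dist(centroids[y], x))


# Hypothetical training data: [mouth_corner_lift, brow_raise] per face.
X_train = [[0.9, 0.2], [0.8, 0.3],    # enjoyment
           [0.2, 0.9], [0.3, 0.8],    # surprise
           [-0.6, 0.1], [-0.5, 0.0]]  # sadness
y_train = ["enjoyment", "enjoyment", "surprise", "surprise",
           "sadness", "sadness"]

centroids = train_centroids(X_train, y_train)
print(predict(centroids, [0.85, 0.25]))  # enjoyment
print(predict(centroids, [0.25, 0.95]))  # surprise
```

The "learning" here is just averaging labeled examples, but the workflow of training on labeled data and then predicting on unseen data is the same one larger models follow.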

Check Your Emotions and Get Advice with Our Fun Demo

Just follow the link to our emotion detection AI demo.

Follow the lead of companies that prioritize AI breakthroughs able to recognize, understand, and react to human emotions while fostering stronger customer relationships.

Still wondering how emotion recognition solutions could add extra value to the customer experience in your industry? Reach out today and start your Emotion AI journey.